So much of the drift has just been marketing. Rebranding a Markov Chain stapled onto a particularly large graph as the Master Control Program from Tron.

Fr I was about to say the same thing. Aside from better hardware and more layers the technology really hasn't changed from this level to begin with.

We've learned a little about what emergent behavior and trends look like in machine learning algorithms when graphed, though: the output becomes more and more convergent. It's as if the model forms its own little confirmation bias and produces more and more samey results.

> Rebranding a Markov Chain stapled onto a particularly large graph

Could you elaborate how this applies to various areas of AI in your opinion?

Several models are non-Markovian. There are also a lot of models and algorithms for which describing them as Markov chains, or even comparing them to Markov chains, would be incorrect and unsuitable.
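For anyone following along, this is roughly what a literal Markov chain text generator looks like. It's a minimal sketch, not anyone's production model: the toy corpus and the function names `build_chain` and `generate` are made up for illustration. The point is the Markov property itself: the next word is picked by looking only at the current word, nothing earlier.

```python
import random
from collections import defaultdict


def build_chain(text):
    """Map each word to the list of words observed right after it."""
    words = text.split()
    chain = defaultdict(list)
    for current, following in zip(words, words[1:]):
        chain[current].append(following)
    return dict(chain)


def generate(chain, start, length, rng=None):
    """Walk the chain; each step depends only on the current word."""
    rng = rng or random.Random()
    word = start
    output = [word]
    for _ in range(length - 1):
        followers = chain.get(word)
        if not followers:  # dead end: this word was never followed by anything
            break
        word = rng.choice(followers)
        output.append(word)
    return " ".join(output)


# Hypothetical toy corpus, just to have something to walk over.
corpus = "the cat sat on the mat and the cat ate the fish"
chain = build_chain(corpus)
print(generate(chain, "the", 8))
```

Anything that conditions on more than the current state (a long context window, recurrent memory, and so on) is no longer this structure, which is the distinction the comment above is drawing.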

I'm not sure what people think AI was ever going to be... every time something new comes out it's always dismissed because "it's basically just a X that does Y". I think that will continue to be the case until there is some literal connection to actual brains, in which case the concept of what a brain is will probably be questioned as well.

> I’m not sure what people think AI was ever going to be…

The heavy investment in AI is coming under the assumption that these advanced processes will replace huge portions of the human workforce.

So we don't need lawyers, because we just put prompts into a Law AI and it gives us a verdict. We don't need doctors, because we just put symptoms into a Medical AI and it gives us a diagnosis and treatment plan. We don't need salespeople, because we just put the product into a Marketing AI and it spits out a bunch of convincing ad copy.

> the concept of what a brain is will probably be questioned as well.

We already connect our brains to our computers. We just use screens and keyboards as our interface.

I suppose you could argue that a guy with a calculator or a camera or a chat app is mentally different than one without it. But I think the goal with AI is supplanting human minds, not complementing them.

Sorry for the potato photograph, my phone was a potato, I was a student after all

Our phones back then were actual potatoes and we wore them next to the turnip on our belt, as was the fashion back then.

That was before the dicketies.

Looks more like an egg to me

As a kid I used to check out books from the library that had little BASIC games you could transcribe into your PC. Times have certainly changed.

I'm literally on an internship training course where the Exercises Left For The Readers are implementing Number Guessing Games on the various technologies talked about on the course. I'm like "thanks, but I read about this particular exercise extensively in the BASIC age. I'm not going to redo these things unless your training material will have little cartoon robots. Like, you know, in the Usborne books or something."

Why would an internship train you on an antiquated language that hasn't been used in decades?

Well, they train me in JavaScript frameworks and such. I allege this knowledge will be useless in a few decades. Or even sooner, based on my meagre knowledge so far.

JavaScript is used on virtually every website on the planet today though. BASIC hasn't been used for anything in like 40 years.

20-ish, rather. You can still find some legacy systems running some version of Visual Basic on Windows.

Also disagree with op, javascript is the current "lingua franca" of programming. Unless every browser decides to allow scripting in a less shotgun-your-foot language, javascript will remain widely used.

> Unless every browser decides to allow scripting in a less shotgun-your-foot language, javascript will remain widely used.

It's called WebAssembly, and all the major browsers (Safari, Chrome, Firefox, Edge) now support it. That's not to say that JavaScript is going to disappear, but other languages might take over much of its market share.

Even wasm relies on javascript and frankly, I see its existence as a failure of many layers. Machine code -> Operating system -> Browser -> WASM (emulated machine code)

Similar with the computer magazines, before they started coming with floppy disks.

me proudly showing off to my dad that I had spent hours teaching the Timex Sinclair to... balance a checkbook!

dad: my checkbook is already balanced.

me: ahhh, yes well, just imagine though if things had lined up huh??

You can tell just from the font that this book is from the 80s

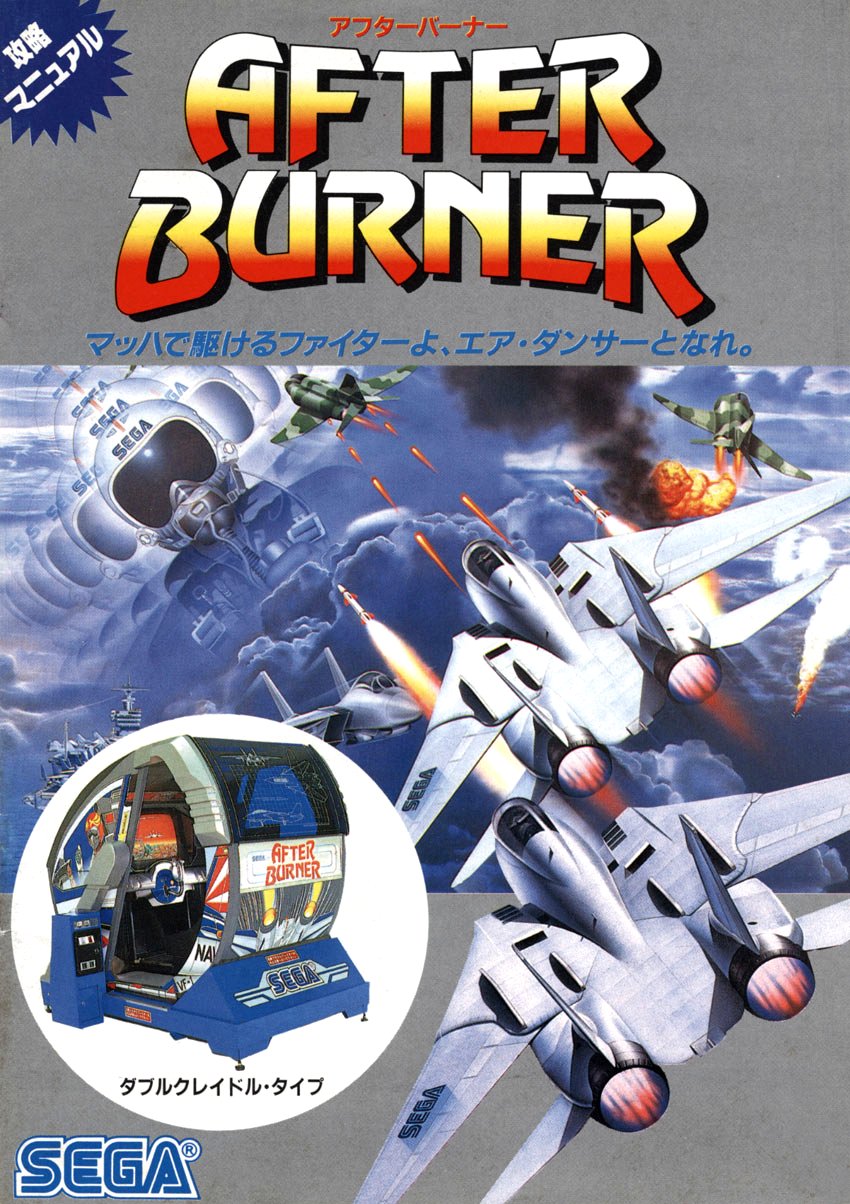

The font is Revue! People often say that their first love-hate font was Comic Sans - well, this was the first font I thought was pretty damn cool, and I saw it getting run into the ground with overuse in the early 1990s. It was pretty much in half of the ads in the early 1990s. (My theory: It was bundled with a popular graphic design passion package / clipart bundle, Arts & Letters, and everyone made their ads with it. I can't wait for the day when I finally get arsed to install a Windows 3.0 environment and my copy of Arts & Letters and prove the doubters wrong)

I half expected the first comment about the font to be about The Room to be honest.

Thanks! I was racking my brain trying to think of where I knew it from, and after seeing the page that you linked I'm almost certain that it's After Burner that is causing my brain to trigger the 80s association.

Randomly stumbling upon a font nerd was not on my bingo card today. I didn't know about Revue, thanks.

It's object-oriented programming. The object is to get investors $$$

Wait does it teach ai programming in BASIC?

```
10 dim prompt
20 input(prompt)
30 pring "Nice to meet you"+prompt
40 play g3 c4 e4
50 goto 20
```

Syntax error on line 30

Please insert Pringles verification can to use pring function

I'm in middle of a Rust module of a course, so I'll do some Programmer Friendly Error Messages:

Line 10: You do not need to dimension a dimensionless variable such as a standalone string variable. (This ain't Visual Basic.)

Line 20: input doesn't do parentheses, sorry

Line 20: Input accepts a string: Perhaps you meant prompt$?

Line 30: Concatenation is too modern, perhaps instead of + you meant ; just saying?

Line 40: Invalid syntax with play, maybe you meant play "g3c4e4"?

Oh my! Haha all these modern languages poisoned my memory

What does pring do?

Fun fact, the Perceptron is basically the first machine learning AI, and the artificial neuron model behind it dates back to 1943 (Rosenblatt's perceptron itself followed in the late 1950s). It took a long time and many advancements in hardware before it became recognizable as the AI of today, but it's hardly a new idea.

People only discovered that multi-layer non-linear neural networks work in the 90s. It's not really reasonable to equate perceptrons with the stuff people use today.

Obviously there have been major improvements over the past 80 years, but that's still considered the first neural network. The need for multi-layer neural networks was recognized by 1969, but the knowledge of how to do that took a while to be worked out.
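To make the single-layer vs. multi-layer point concrete, here's a minimal sketch of the classic perceptron learning rule in plain Python. The function names, learning rate and epoch count are my own picks for illustration. It learns AND without trouble, but no single-layer perceptron can get all four XOR cases right, because XOR is not linearly separable. That's the limitation that made the need for multiple layers obvious.

```python
def predict(w, b, x):
    """Fire (1) if the weighted sum plus bias is positive, else 0."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0


def train_perceptron(samples, epochs=20, lr=1.0):
    """Perceptron rule: weights only move when a sample is misclassified."""
    w = [0.0] * len(samples[0][0])
    b = 0.0
    for _ in range(epochs):
        for x, target in samples:
            err = target - predict(w, b, x)  # -1, 0 or +1
            w = [wi + lr * err * xi for wi, xi in zip(w, x)]
            b += lr * err
    return w, b


AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

for name, data in (("AND", AND), ("XOR", XOR)):
    w, b = train_perceptron(data)
    correct = sum(predict(w, b, x) == t for x, t in data)
    print(f"{name}: {correct}/4 correct")
```

AND comes out fully correct; XOR always misses at least one case no matter how long you train, since a single line can't separate its classes.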

GOTO line 987,897,544,910

No longer in structured BASIC, fortunately.

you could say it was very basic AI

I ran ELIZA on a Timex/Sinclair 2068.

Why do you think you wanted to run ELIZA on a Timex/Sinclair 2068?

Tell me about "Why do you think you wanted to run ELIXA on a Times/Sinclair 2968?".

I remember that book! Wasn't it basically, how to make your own Eliza with a bunch of If...Then's?

It's way more exhaustive actually! https://books.google.fr/books?id=7mWeBQAAQBAJ&pg=PA97&hl=fr&source=gbs_selected_pages&cad=1#v=onepage&q&f=false
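For reference, the "bunch of If...Then's" approach can be sketched in a few lines of Python. To be clear, these rules are invented for illustration and are not taken from the book. The idea is just ordered pattern matching: the first rule that matches reflects part of the input back, and a catch-all handles everything else.

```python
import re

# Hypothetical rule table: (pattern, reply template). Order matters.
RULES = [
    (re.compile(r"\bI ran (.+)", re.I), "Why do you think you wanted to run {0}?"),
    (re.compile(r"\bI am (.+)", re.I), "How long have you been {0}?"),
    (re.compile(r"\bbecause\b", re.I), "Is that the real reason?"),
]


def respond(line):
    """Return the reply for the first matching rule, else a generic prompt."""
    text = line.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(*match.groups())
    # Fallback when nothing matches: echo the whole input back.
    return f'Tell me about "{line.strip()}".'


print(respond("I ran ELIZA on a Timex/Sinclair 2068."))
```

Feed the reply back in and nothing matches, so you get the "Tell me about ..." fallback, which is more or less the exchange a few comments up.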

oh god, you're making me think of the difference between a procedurally generated level and a randomly generated one.

The fucking generative AIs don't even have anything to stop them spewing crap

wave function collapse is a vastly superior procedural generation technique to generative pretrained transformer

Same for enemy AI, for which I'm planning to implement some fuzzy logic support into my game engine, if there's no solution for it already in some D library.

nah ai is great.

My lukewarm take is that all this hate on AI is really just hating on big companies trying to squeeze money out of some new development. AI (or rather LLMs) are not the problem.

I personally like AI in its current state, still goofy and not a valid replacement for people. I definitely don't like that all of these corporations are justifying their billions in spending by forcing it into any and every app they can think of!

Why do I need Gemini to suggest a list of items I'm gonna need in my notes app that I likely opened to create a list of things I knew I already needed?

Same. Wished it had just stayed as an idea. Wished it had stayed as just a concept to be used in movies, games, shows and books. Wished it had just stayed in its boundaries.