this post was submitted on 27 Nov 2024

MODERATORS

you are viewing a single comment's thread

view the rest of the comments

view the rest of the comments

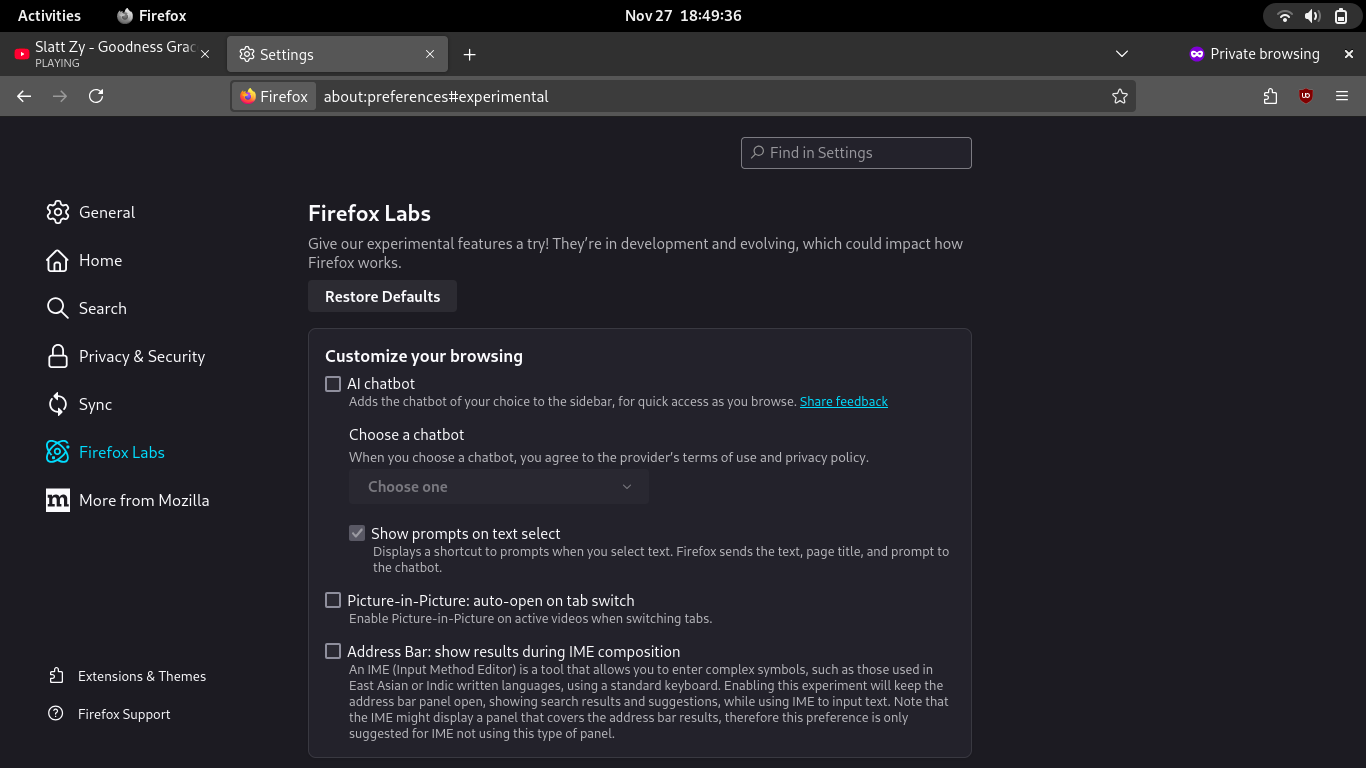

It is a sidebar that sends a query from your browser directly to a server run by a giant corporation like Google or OpenAI, consumes an excessive amount of carbon/water, then sends a response back to you that may or may not be true (because AI is incapable of doing anything but generating what it thinks you want to see).

Not only is it unethical in my opinion, it's also ridiculously rudimentary...

It gives you many options for what to use; you can use Llama, which runs offline. It needs to be enabled first, though, via about:config > browser.ml.chat.hideLocalhost.
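
For anyone who would rather not click through about:config, the same pref can probably be set from a user.js file in the Firefox profile folder. This is only a sketch: it assumes the pref has to be flipped to false to reveal the localhost option in the sidebar's provider list, so double-check the value against the guide for your Firefox version.

```js
// user.js in the Firefox profile folder (read at startup).
// Assumption: false un-hides the localhost provider in the AI chatbot sidebar.
user_pref("browser.ml.chat.hideLocalhost", false);
```

You would still need a local model server running before the option does anything useful.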

and thus is unavailable to anyone who isn't a power user, as they will never see a comment like this and about:config would fill them with dread.

Lol, that is certainly true, and you would also need to set it up manually, which even power users might not be able to do. Thankfully, there is an easy-to-follow guide here: https://ai-guide.future.mozilla.org/content/running-llms-locally/.

There's a huge difference between something that is presented in an easily accessible settings menu and something that requires you to go to an esoteric page, click through a scary warning message, and then search for obscure settings, all before even installing a server.

Nothing was compelling Mozilla to rush this through. In addition, nobody was asking Mozilla for remote access to AI, AFAIK. Before Mozilla pushed for it, people were praising them for resisting the temptation to follow the flock. They could have waited and provided better defaults.

Or just wedged it into an extension, something they're currently doing anyway.