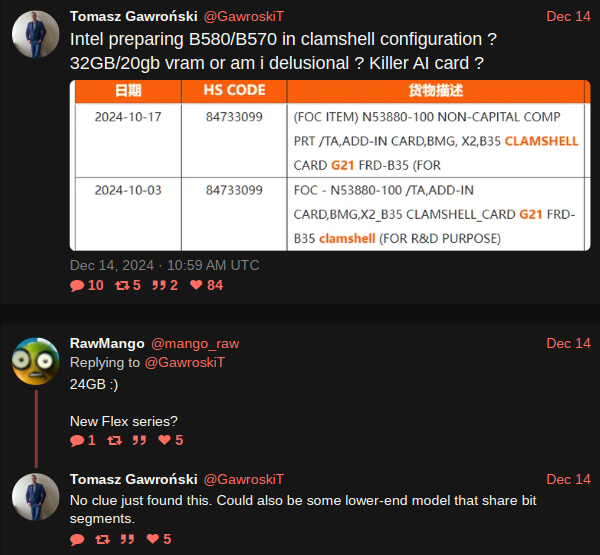

All GDDR6 modules, be they from Samsung, Micron, or SK Hynix, have a data bus that's 32 bits wide. However, the bus can be used in a 16-bit mode: the entire contents of the RAM are still accessible, just with less peak bandwidth per module for data transfers. Since the memory controllers in the Arc B580 are 32 bits wide, two GDDR6 modules, each running in 16-bit mode, can be wired to each controller, an arrangement known as clamshell mode.

With six controllers in total, Intel's largest Battlemage GPU (to date, at least) has an aggregated memory bus of 192 bits and normally comes with 12 GB of GDDR6. Wired in clamshell mode, the total VRAM now becomes 24 GB.
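The arithmetic behind those figures can be sketched in a few lines of Python. This is only an illustration: the `MemoryConfig` class and its field names are made up for this example, and the 2 GB per-module capacity is an assumption inferred from 12 GB spread across six modules, not a figure stated above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MemoryConfig:
    """Hypothetical model of a GPU memory layout (names are illustrative)."""
    controllers: int            # number of memory controllers on the GPU
    controller_width_bits: int  # width of each controller's bus
    module_capacity_gb: int     # ASSUMPTION: 2 GB per module (12 GB / 6 modules)
    clamshell: bool = False     # True: two modules share one controller

    @property
    def modules_per_controller(self) -> int:
        return 2 if self.clamshell else 1

    @property
    def modules(self) -> int:
        return self.controllers * self.modules_per_controller

    @property
    def module_width_bits(self) -> int:
        # In clamshell mode each module runs its bus at half width (16 bits).
        return self.controller_width_bits // self.modules_per_controller

    @property
    def bus_width_bits(self) -> int:
        # The aggregate bus depends only on the controllers, not the module count.
        return self.controllers * self.controller_width_bits

    @property
    def vram_gb(self) -> int:
        return self.modules * self.module_capacity_gb


standard = MemoryConfig(controllers=6, controller_width_bits=32, module_capacity_gb=2)
clamshell = MemoryConfig(controllers=6, controller_width_bits=32, module_capacity_gb=2,
                         clamshell=True)

for name, cfg in (("standard", standard), ("clamshell", clamshell)):
    print(f"{name}: {cfg.modules} modules x {cfg.module_width_bits}-bit, "
          f"{cfg.bus_width_bits}-bit bus, {cfg.vram_gb} GB")
```

Under these assumptions the bus stays at 192 bits in both layouts; only the module count, and therefore the capacity, doubles.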

We may never see a 24 GB Arc B580 in the wild, as Intel may just keep them for AI/data centre partners like HP and Lenovo, but you never know.

Well, it would be a cool card if it's actually released. It could also be a way for Intel to "break into the GPU segment" when combined with their AI tools.

They’re starting to release tools for running AI tasks on Intel Arc, such as AI Playground and IPEX-LLM:

https://game.intel.com/us/stories/introducing-ai-playground/

https://www.intel.com/content/www/us/en/products/docs/discrete-gpus/arc/software/ai-playground.html

https://game.intel.com/us/stories/wield-the-power-of-llms-on-intel-arc-gpus/

https://github.com/intel-analytics/ipex-llm